I had wanted to do this for a while. I finally welcomed Charles Joy, a Principal Program Manager on the Azure Stack team, back to the show, and we discussed the recent release of Microsoft Azure Stack Technical Preview 1. It’s a fun episode. Enjoy it.

(Part 2) Deploying Windows 10: How to Migrate Users and User Data to Windows 10

Yung Chou, Kevin Remde and Dan Stolts continue their multi-part TechNet Radio series on Windows 10. In part 2 they showcase free tools like the User State Migration Tool (USMT) that can easily migrate users and user data to Windows 10 from Windows XP, 7 and 8.

IT Pros’ Job Interview Cheat Sheet of Multi-Factor Authentication (MFA)

Internet Climate

Recently, as hacking has become a business model and identity theft an everyday phenomenon, hostility on the Internet has increased and concerns about PC and network security have escalated. A long and complex password is no longer sufficient to protect your assets. In addition to a strong password policy, adding MFA is now a baseline defense to better ensure the authenticity of an examined user and an effective vehicle to deter fraud.

Market Dynamics

Furthermore, the growth in online e-commerce transactions, the compliance needs of regulated verticals like finance and healthcare, the unique business requirements of market segments like the gaming industry, the popularity of smartphones, and the adoption of cloud identity services with MFA technology all contribute to the growth of the MFA market. Market research published in August 2015 reported that “The global multi-factor authentication (MFA) market was valued at USD 3.60 Billion in 2014 and is expected to reach USD 9.60 Billion by 2020, at an estimated CAGR of 17.7% from 2015 to 2020.”
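
As a quick sanity check on the quoted figures, the compound annual growth rate can be recomputed from the two market values. This is only a sketch: the `cagr` helper is a name introduced here, and treating 2014 to 2020 as six compounding years is an assumption, since the research states the rate for 2015 to 2020.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or periods <= 0:
        raise ValueError("start_value and periods must be positive")
    return (end_value / start_value) ** (1.0 / periods) - 1.0


# Figures quoted above: USD 3.60 billion (2014) and USD 9.60 billion (2020).
# Assumption: six compounding years between the two values.
mfa_cagr = cagr(3.60, 9.60, 6)
print(f"Implied CAGR: {mfa_cagr:.1%}")
```

Under that assumption the implied rate comes out near 17.8 percent, which is in line with the quoted 17.7 percent.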

Strategic Move

As mobility becomes part of the essential business operating platform, a cloud-based authentication solution offers more flexibility and long-term benefits. This is apparent in what The Street stated:

“Availability of cloud-based multi-factor authentication technology has reduced the maintenance costs typically associated with hardware and software-based two-factor and three-factor authentication models. Companies now prefer adopting cloud-based authentication solutions because the pay per use model is more cost effective, and they offer improved reliability and scalability, ease of installation and upgrades, and minimal maintenance costs. Vendors are introducing unified platforms that provide both hardware and software authentication solutions. These unified platforms are helping authentication vendors reduce costs since they need not maintain separate platforms and modules.”

Disincentives

Depending on where IT is and where IT wants to be, the initial investment may be consequential and significant. Adopting various technologies and cloud computing may be necessary, while facing resistance to change in the corporate IT culture.

Snapshot

The following is not an exhaustive list, but it covers some important facts, capabilities and considerations of Windows MFA.

Closing Thoughts

MFA helps ensure the authenticity of a user. MFA by itself nevertheless cannot stop identity theft, since there are various ways, like key loggers and phishing, to steal an identity. Still, as hacking has become a business model for some underground industries, and even a military offense, and credential theft has developed into a hacking practice, operating without a strong authentication scheme is not an option. MFA remains arguably a direct and effective way to deter identity theft and fraud.

And the emerging trend of employing biometrics, instead of a password, with a key-based credential that leverages hardware and virtualization-based security like Device Guard and Credential Guard in Windows 10 further minimizes the attack surface by ensuring hardware boot integrity and OS code integrity, and by allowing only trusted system applications to request a credential. Device Guard and Credential Guard together offer a new standard in preventing Pass-the-Hash (PtH), which is one of the most popular types of credential theft and reuse attacks seen by Microsoft so far.

Above all, going forward we must not consider MFA an afterthought or an add-on, but an immediate and imperative need of a PC security solution. IT needs to implement MFA sooner rather than later, if it has not already.

Moving Data In, Out and Between Azure Storage Accounts Using AzCopy

Windows Azure Pack Express Installation

This is a project for Microsoft Virtual Academy on which I had the pleasure of working with Shri (Shriram Natarajan, a Program Manager on the Windows Azure Pack team). I had a wonderful time and learned much from him.

Windows Azure Pack is one of my favorite subjects: transforming your private cloud into a customer-centric IT as a service hub. The idea is to offer customers a solution platform such that they can self-serve on consuming, establishing, and managing IT capabilities including network, storage, and compute on demand, regardless of whether resources are on-premises, deployed in Azure, or hosted in a 3rd party facility. The enabler, Windows Azure Pack, places an abstraction over a VMM-based private cloud to present it with an Azure-like interface and experience, while integrating and consolidating at the middleware layer to enable on-premises, Azure, and 3rd-party resources to be managed with a consistent experience.

The first step in this strategic approach is to experience and assess Windows Azure Pack as it relates to your unique IT environment, which is what this project is about.

Windows Server 2012 R2 Hyper-V Replica Configuration, Simulation and Verification Explained

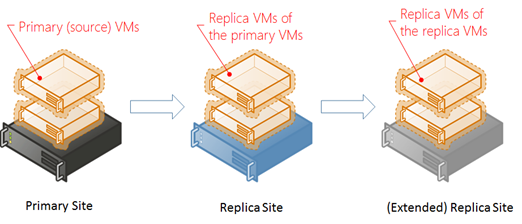

The Hyper-V role in Windows Server 2012 R2 includes Hyper-V Replica, a built-in replication mechanism at the virtual machine (VM) level. Hyper-V Replica can asynchronously replicate a selected VM running at a primary (or source) site to a designated replica (or target) site across LAN/WAN. The following schematic depicts this concept.

A replication process first replicates the VM, i.e. creates an identical VM in the Hyper-V Manager of a target replica server. Subsequently, the change tracking module of Hyper-V Replica tracks the write operations in the source VM and replicates them at a set interval after the last successful replication, regardless of whether the associated vhd files are hosted in SMB shares, Cluster Shared Volumes (CSVs), SANs, or directly attached storage devices.

Hyper-V Replica Requirements

In the schematic above, both the primary site and the replica site are Windows Server 2012 R2 Hyper-V hosts. The former runs production, or so-called primary, VMs, while the latter hosts the replicated ones, which are powered off; each is to be brought online should the corresponding primary VM experience an outage. Hyper-V Replica requires neither shared storage nor specific storage hardware. Once an initial copy is replicated to a replica site, Hyper-V Replica replicates only the changes of a configured primary VM, i.e. the deltas, asynchronously.

It goes without saying that assessing business needs and developing a plan on what to replicate and where is essential. In addition, both the primary site and the replica site (i.e. the Hyper-V hosts that run the source and replica VMs, respectively) must have Hyper-V Replica enabled in the Hyper-V Settings of Hyper-V Manager. IP connectivity is assumed when applicable. Notice that Hyper-V Replica is a server capability and is not applicable to client Hyper-V, such as Hyper-V running on a Windows 8 client.
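
The idea of an initial copy followed by deltas can be illustrated with a toy model. The sketch below is conceptual only and does not reflect how Hyper-V Replica is implemented; `TrackedDisk`, `initial_copy` and `apply_delta` are hypothetical names introduced for illustration.

```python
class TrackedDisk:
    """Toy disk that remembers which blocks were written since the last sync."""

    def __init__(self, blocks):
        self.blocks = dict(blocks)
        self.dirty = set()

    def write(self, index, data):
        # Every write is recorded so that only changed blocks are sent later.
        self.blocks[index] = data
        self.dirty.add(index)

    def take_delta(self):
        # Collect the changed blocks and reset the change tracking.
        delta = {i: self.blocks[i] for i in self.dirty}
        self.dirty.clear()
        return delta


def initial_copy(source):
    """Full copy of the disk, analogous to the initial replica."""
    return dict(source.blocks)


def apply_delta(replica, delta):
    """Apply only the changed blocks to the replica."""
    replica.update(delta)


primary = TrackedDisk({0: "boot", 1: "data-v1", 2: "logs-v1"})
replica = initial_copy(primary)

primary.write(1, "data-v2")
primary.write(2, "logs-v2")
primary.write(2, "logs-v3")  # a second write to the same block

delta = primary.take_delta()
apply_delta(replica, delta)

print("blocks sent:", sorted(delta))
print("replica in sync:", replica == primary.blocks)
```

Block 0 is never resent because it did not change, which is the point of replicating deltas rather than whole disks.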

Establishing Hyper-V Replica Infrastructure

Once all the requirements are in place, enable Hyper-V Replica on both the primary site (i.e. a Windows Server 2012 R2 Hyper-V host where a source VM runs) and the target replica server, then configure the intended VM at the primary site for replication. The following is a sample process to establish Hyper-V Replica.

Step 1 – Enable Hyper-V Replica

Identify a Windows Server 2012 R2 Hyper-V host as a primary site where a target source VM runs. Enable Hyper-V Replica in the Hyper-V settings in Hyper-V Manager of the host. The following are Hyper-V Replica sample settings of a primary site. Repeat the step on a target replica site to enable Hyper-V Replica. In this article, DEVELOPMENT and VDI are both Windows Server 2012 R2 Hyper-V hosts where the former is a primary site while the latter is the replica site.

Step 2 – Configure Hyper-V Replica on Source VMs

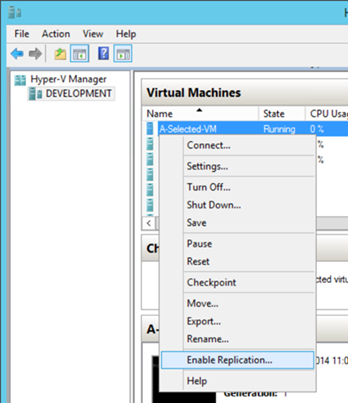

Using the Hyper-V Manager of a primary site, enable Hyper-V Replica for a VM by right-clicking the VM and selecting the option, then walk through the wizard to establish a replication relationship with a target replica site. Below shows how to enable Hyper-V Replica of a VM, named A-Selected-VM, at the primary site, DEVELOPMENT. The replication settings are fairly straightforward, and a reader should get familiar with them.

Step 3 – Carry Out an Initial Replication

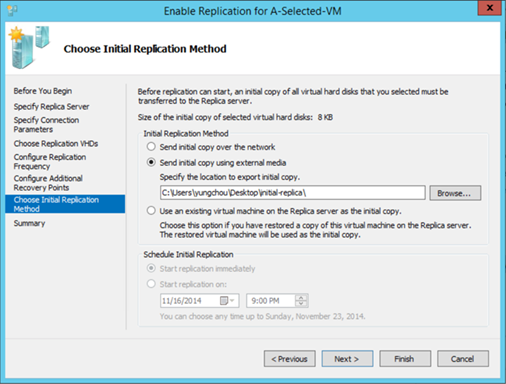

While configuring Hyper-V Replica of a source VM in step 2, there are options for delivering an initial replica. An initial replica can be transmitted over the network, either immediately or according to a configured schedule. Optionally, an initial copy can also be delivered out of band, i.e. with external media, when sending it over the wire may overwhelm the network, be unreliable, take too long, or not be preferred due to the sensitivity of the content. There are, however, additional considerations with out-of-band delivery of an initial replica.

Option to Send Initial Replica Out of Band

The following shows the option to send initial copy using external media while enabling Hyper-V Replica on a VM named A-Selected-VM.

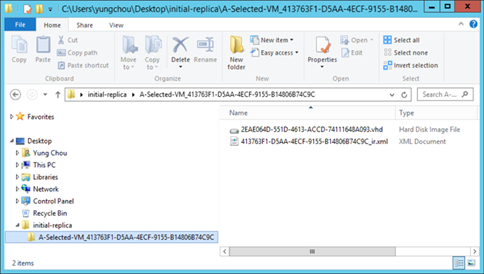

An exported initial copy includes the associated VHD disk and an XML file capturing the configuration information as depicted below. This folder can then be delivered out of band to the target replica server and imported into the replica VM.

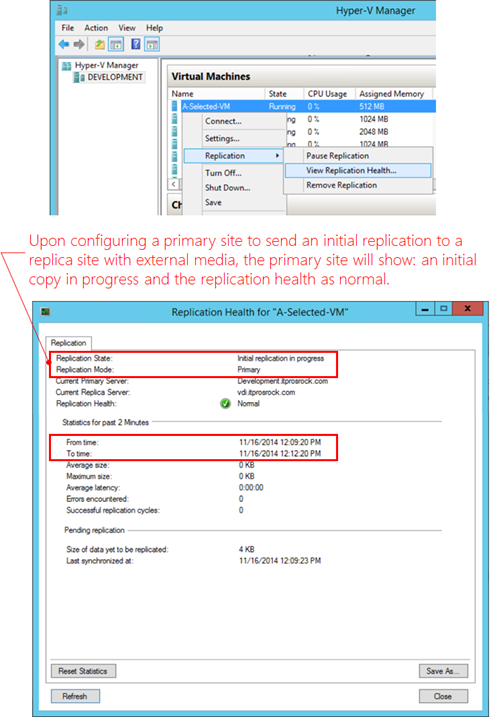

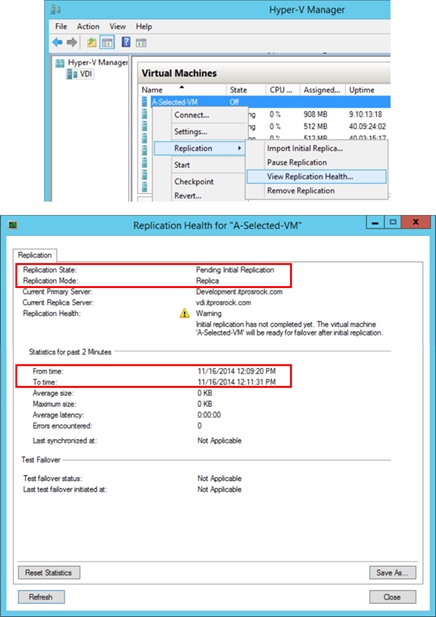

When delivering an initial copy with external media, a replica VM configuration is still automatically created at the intended replica site, exactly as when sending a replica VM online. The difference is that the source VM (here the VM on the primary site, DEVELOPMENT) shows an initial replication in progress in its replication health, while the replica VM (here the VM on the replica site, VDI) has an option to import an initial replica in its replication settings and its replication health presents a warning, as shown below:

The primary site

The replica site

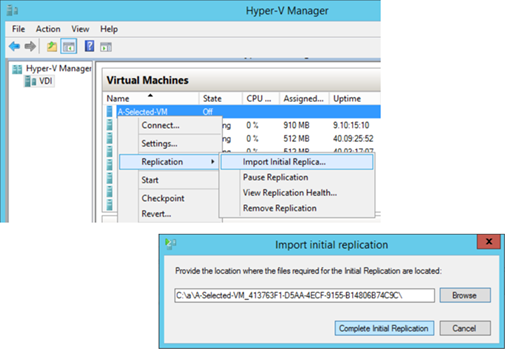

After receiving the initial replica with external media, the replica VM can then import the disk as shown:

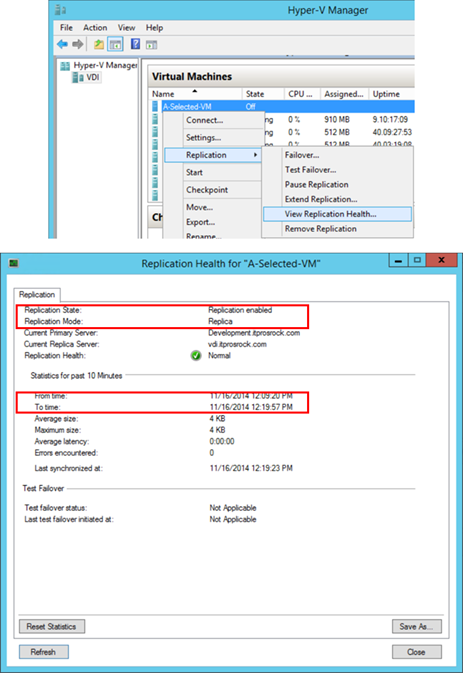

and upon successfully importing the disk, the following shows the replica VM now with additional replication options and updated replication health information.

Step 4 – Simulate a Failover from a Primary Site

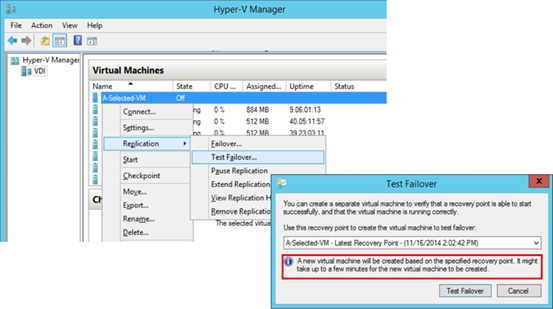

This is done at an associated replica site. First verify and ensure the replication health on a target replica VM is normal. Then right-click the replica VM and select Test Failover in Replication settings as shown here:

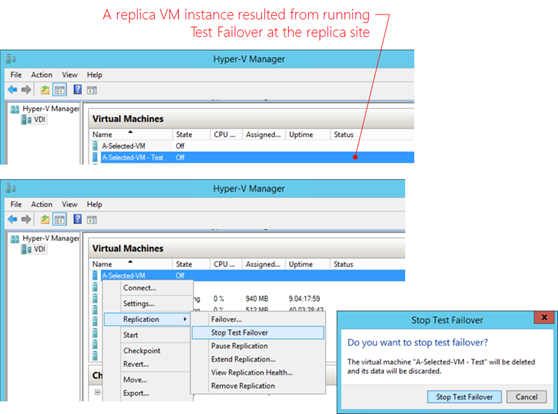

Notice that a real failover should occur only when the primary VM experiences an outage. A simulated failover, however, does not require shutting down the primary/source VM. It creates a VM instance at the replica site without altering the replica settings in place. The VM instance created by a Test Failover should be deleted with the Stop Test Failover option in the replication UI where the Test Failover was originally initiated, as demonstrated below, to ensure all replication settings remain valid.

Step 5 – Do a Planned Failover from the Primary Site

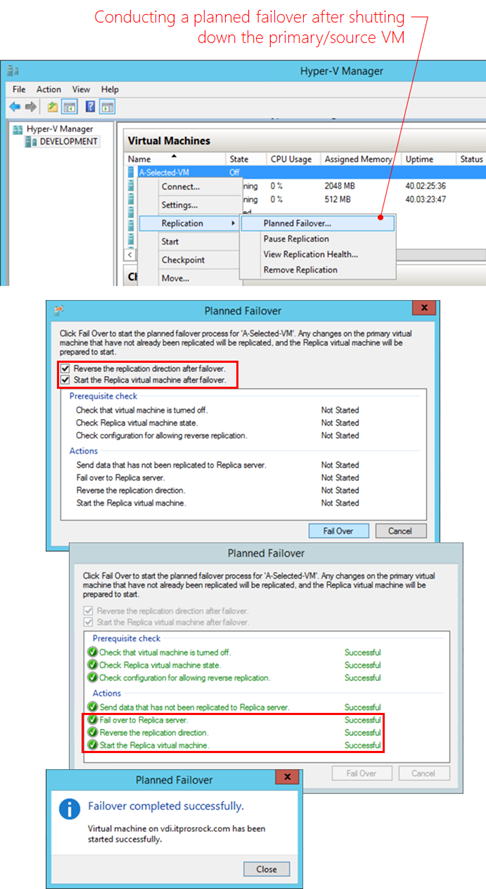

In a maintenance event where a primary/source VM is expecting an outage, ensure the replication health is normal, shut down the source VM, and then conduct a Planned Failover from the primary site as shown below.

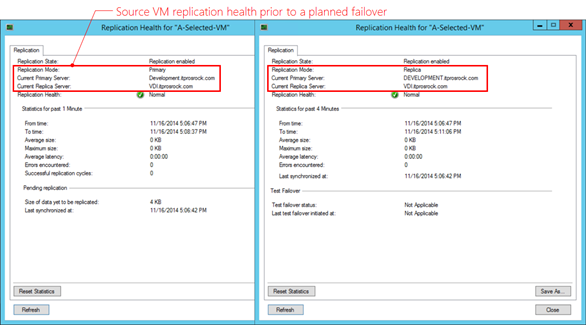

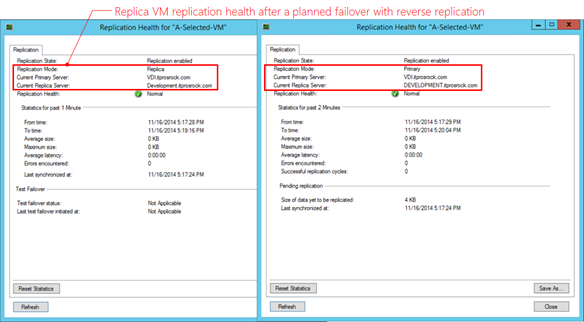

Notice that successfully performing a Planned Failover with the presented settings will automatically establish a reverse replication relationship. To verify the failover has been correctly carried out, check the replication health of a replication pair of VMs on both the primary site and the replica site, before and after a planned failover. The results need to be consistent across the board. The following examines a replication pair: A-Selected-VM on DEVELOPMENT at the primary site and its replica on VDI at the replica site. Prior to a planned failover from DEVELOPMENT, the replication health information of the source VM (left, at the DEVELOPMENT server) and the replica VM (right, at the VDI server) is as shown below.

After a successful planned failover event as demonstrated at the beginning of this step, the replication health information becomes the following, where the replication roles have been reversed and the state is normal. This signifies the planned failover was carried out successfully.

In the event that a source VM experiences an unexpected outage at a primary site, failing over the primary VM to its replica will not automatically establish a reverse replication, because the un-replicated changes have already been lost along with the unexpected outage.

Step 6 – Finalize the Settings with Another Planned Failover

Conduct another Planned Failover event to confirm that the reverse replication works. In the presented scenario, the primary VM is now shut down at the primary site (i.e. VDI at this time) and then failed over back to the replica site (i.e. DEVELOPMENT at this time). Upon successful execution of the planned failover event, the resulting replication relationship should again be DEVELOPMENT as the primary site with VDI as the replica site, which is the same state as at the beginning of step 5. At this time, in a DR scenario, failing over from the production site (in the above example, the DEVELOPMENT server) to a DR site (here, the VDI server) and, as needed, failing back from the DR site to the original production site are proven to work bi-directionally.
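
The role swap in steps 5 and 6 can be pictured with a small state model. This is a minimal sketch, not an interface to Hyper-V; the `ReplicationPair` class is hypothetical, and it only assumes what the steps describe, namely that each successful planned failover reverses the replication direction.

```python
from dataclasses import dataclass


@dataclass
class ReplicationPair:
    """Tracks which host currently plays the primary and replica roles."""

    primary: str
    replica: str

    def planned_failover(self) -> None:
        # A successful planned failover reverses the replication direction.
        self.primary, self.replica = self.replica, self.primary


pair = ReplicationPair(primary="DEVELOPMENT", replica="VDI")

pair.planned_failover()  # step 5: fail over from DEVELOPMENT to VDI
after_step_5 = (pair.primary, pair.replica)

pair.planned_failover()  # step 6: fail back from VDI to DEVELOPMENT
after_step_6 = (pair.primary, pair.replica)

print("after step 5:", after_step_5)
print("after step 6:", after_step_6)
```

Two planned failovers in a row return the pair to its starting roles, which is the check step 6 performs.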

Step 7 – Incorporate Hyper-V Replica into Existing SOPs

Incorporate Hyper-V Replica configurations and maintenance into applicable IT standard operating procedures and start monitoring and maintaining the health of Hyper-V Replica resources.

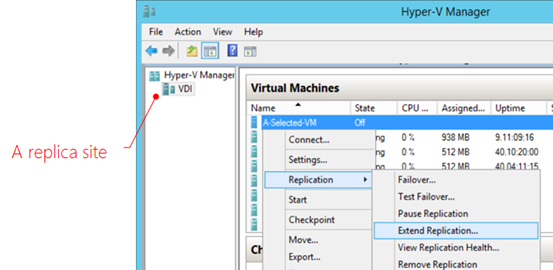

Hyper-V Extended Replication

In Windows Server 2012 R2, we can now further replicate a replica VM from a replica site to an extended replica site, similar to a backup’s backup. The concept as illustrated below is quite simple.

The process is to first set up Hyper-V Replica from a primary site to a target replica site as described earlier in this article. Then at the replica site, simply configure Hyper-V Replica of a replica VM, as shown below. In this way, a source VM is replicated from a primary site to a replica site which then replicates the replica VM from the replica site to an extended replica site.

In a real-world setting, Hyper-V Extended Replication fits common business needs. For instance, IT may want a replica site nearby for convenience and timely response in the event that monitored VMs experience a localized outage within IT’s control while the datacenter remains up and running. In an outage that causes an entire datacenter or geo-region to go down, an extended replica stored in a geo-distant location becomes pertinent.

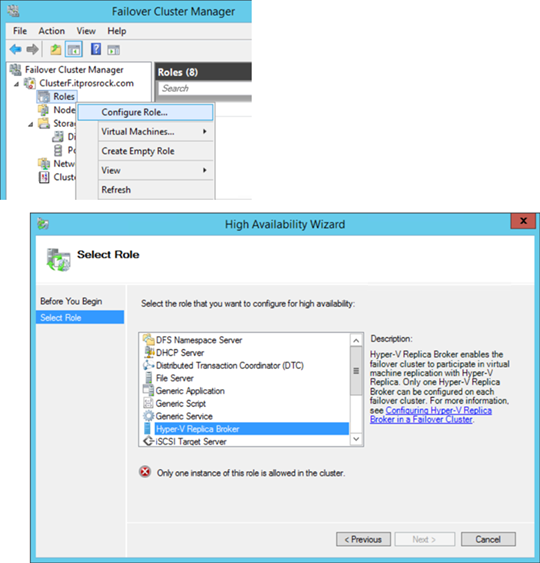

Hyper-V Replica Broker

Importantly, to employ a Hyper-V failover cluster as a replica site, one must use Failover Cluster Manager to perform all Hyper-V Replica configuration and management, first creating a Hyper-V Replica Broker role, as demonstrated below.

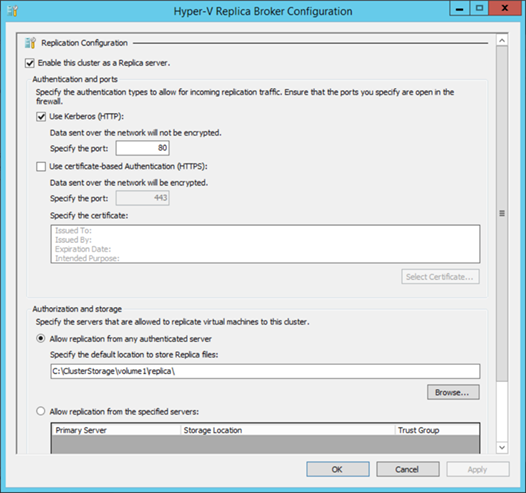

And here is a sample configuration:

Although the above uses Kerberos authentication, as far as Hyper-V Replica is concerned a Windows Active Directory domain is not a requirement. Hyper-V Replica can also be implemented between workgroups and untrusted domains with certificate-based authentication. Active Directory is, however, a requirement when a Hyper-V host is part of a failover cluster, as in the case of Hyper-V Replica Broker, and in such a case all Hyper-V hosts of the failover cluster must be in the same Active Directory domain.

Business Continuity and Disaster Recovery (BCDR)

In a BC scenario with a planned failover event of a primary VM, for example a scheduled maintenance, Hyper-V Replica will first copy any un-replicated changes to the replica VM, so that the event produces no loss of data. Once the planned failover is completed, the replica VM becomes the primary VM and carries the workload, while a reverse replication is automatically set. In a DR scenario, i.e. an unplanned outage of a primary VM, an operator will need to manually bring up the replicated VM with an expectation of some data loss, specifically the data changed since the last successful replication based on the set replication interval.
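
The difference in data loss between the two scenarios can be sketched as follows. This is a conceptual model under stated assumptions, not Hyper-V code: writes are represented as a simple list, `replicated` counts how many of them had reached the replica at the last successful replication, and both function names are hypothetical.

```python
from typing import List, Tuple


def planned_failover(writes: List[str], replicated: int) -> Tuple[List[str], List[str]]:
    """Planned failover: pending writes are copied first, so nothing is lost."""
    pending = writes[replicated:]
    replica_state = writes[:replicated] + pending
    return replica_state, []


def unplanned_failover(writes: List[str], replicated: int) -> Tuple[List[str], List[str]]:
    """Unplanned failover: writes after the last replication never arrive."""
    replica_state = writes[:replicated]
    lost = writes[replicated:]
    return replica_state, lost


# Five writes on the primary VM; only the first three were replicated
# before the failover event.
writes = ["w1", "w2", "w3", "w4", "w5"]
replicated = 3

planned_state, planned_lost = planned_failover(writes, replicated)
unplanned_state, unplanned_lost = unplanned_failover(writes, replicated)

print("planned:  ", planned_state, "lost:", planned_lost)
print("unplanned:", unplanned_state, "lost:", unplanned_lost)
```

In this model, a shorter replication interval means fewer writes accumulate between replications, so less is exposed to loss in an unplanned outage.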

Closing Thoughts

The significance of Hyper-V Replica is not only that it is simple to understand and operate, but also the readiness and affordability that come with Windows Server 2012 R2. A DR solution for businesses of any size is now a reality with Windows Server 2012 R2 Hyper-V. For small and medium businesses, a DR solution has never been so feasible. IT pros can now configure, simulate and verify results in a productive and on-demand manner to formulate, prototype, or pilot a DR solution at a VM level based on Windows Server 2012 R2 Hyper-V infrastructure. At the same time, in an enterprise setting, the System Center family remains the strategic platform to maximize the benefits of Windows Server 2012 R2 and Hyper-V with a comprehensive system management solution including private cloud automation, process orchestration, and services deployment and management at a datacenter level.

Additional Information

My presentation at IT Camp: Modernizing Your Infrastructure

Automating and Managing Hybrid Cloud Environment

In part 5 of our “Modernizing Your Infrastructure with Hybrid Cloud” series, Keith Mayer and I got a chance to discuss and demonstrate ways to manage and automate a hybrid cloud environment. System Center, Microsoft Azure and Windows Azure Pack combined with PowerShell are great solutions for hybrid cloud scenarios. Keith is a great guy and we always have much fun working together.

- [1:15] When architecting a Hybrid Cloud infrastructure, what are some of the important considerations relating to management and automation?

- [4:09] You mentioned PowerShell for automation … how can PowerShell be leveraged for automation in a Hybrid Cloud?

- [7:54] Is PowerShell my ONLY choice? Are there other automation and configuration management solutions available for a Hybrid Cloud?

- [11:12] DEMO: Let’s see some of this in action

- Brief tour of System Center and Azure / Azure Pack management portal interfaces

- Getting started with PowerShell for Azure, Azure Pack automation

- Intro to PowerShell DSC for configuration management

- Intro to Azure Automation for automated runbooks

Additional resources:

Windows Azure Pack (WAP) simplified: Prepping OS Image Disks for Gallery Items

To publish a gallery item in Windows Azure Pack (WAP), the associated OS image disks, i.e. vhd files, must be set according to what’s in the readme file of a gallery resource package. For those who are not familiar with the operations, this can be a frustrating learning experience before finally getting it right. This blog post addresses this concern by presenting a routine with a sample PowerShell script to facilitate the process.

Required Values of OS Image Disks for WAP Gallery Items

For example, below is from the readme file of the gallery resource package, Windows Server 2012 R2 VM Role. It lists out specific property values for WAP to recognize a vhd as an applicable OS image disk for the Role. To find out more about WAP gallery resources, the information is available at http://aka.ms/WAPGalleryResource.

Once a gallery item is introduced into vmm and WAP, it becomes available when a tenant is provisioning a Role, as shown below.

There are several steps involved including:

- Prepping the vhds and, as applicable, importing the resource extension of the gallery item to vmm server library shares

- Importing resource definition to WAP

Here, prepping vhds is the focus. The process and operations are rather mechanical, as detailed in the following.

Process and Operations

The script below illustrates a routine for a vmm administrator to set required property values on applicable OS image disks in a target vmm server’s library shares. This sample script is available for download.

Line 23 connects to a target vmm server.

Line 25 builds a list of vhd objects with the prefix ws2012r2 in their names, which suggests that a vmm administrator should develop a meaningful naming scheme for referenced resources.

Lines 27 and 28 display the settings of the vhd files before making changes.

Lines 30 to 35 set the values of specific fields, including OS, familyname and release, according to the readme file of a particular gallery resource package, for example, WS2012_R2_WG_VMRole_Pkg. If preferred, one can also assign a default product key to a vhd.

The foreach loop goes through each vhd in the list and sets the values. WAP references the tag values of a vhd file to determine whether a vhd is applicable for various workloads. Make sure to add all tag values specified in the readme file, as demonstrated between line 41 and line 44, to build the list. Lines 46 to 52 set all specified values to the corresponding property fields of the currently referenced vhd file.

Finally upon finishing the foreach loop, line 56 and line 57 present the updated settings of the processed vhd files for verification.

User Experience

Here’s an example of running the script:

And with a vmm admin console of the target server, go to Library workspace and right-click an updated vhd disk to verify the property values are correctly set, as shown below.

At this time, with correctly populated property values and tags, the vhds are ready for this particular WAP gallery item, Windows Server 2012 R2 VM Role.

For all the gallery items which WAP displays, a vmm administrator must reference the readme file of each gallery resource package and carry out the above exercise to set property values of the applicable OS image disks. Pay attention to the tags. Missing a tag may invalidate an image disk for some workload and inadvertently prevent that workload from being available for a tenant to provision an associated VM Role in WAP, even though the OS is properly installed on the disk.
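
Because one missing tag is enough to hide a workload, a quick comparison of required tags against the tags set on each disk can catch the problem early. The sketch below only illustrates the check and does not talk to vmm; the tag names `TagA`, `TagB` and `TagC` and the disk names are placeholders, and exact string matching is an assumption. The real values come from the readme file of the gallery resource package.

```python
from typing import Dict, Iterable, List


def missing_tags(required: Iterable[str], disk_tags: Iterable[str]) -> List[str]:
    """Return the required tags that are not present on the disk."""
    present = set(disk_tags)
    return [tag for tag in required if tag not in present]


# Placeholder values for illustration only.
required_tags = ["TagA", "TagB", "TagC"]
library_disks: Dict[str, List[str]] = {
    "ws2012r2-std.vhd": ["TagA", "TagB", "TagC"],
    "ws2012r2-dc.vhd": ["TagA", "TagB"],  # TagC was forgotten
}

report = {name: missing_tags(required_tags, tags) for name, tags in library_disks.items()}

for name, missing in report.items():
    status = "ready" if not missing else "missing: " + ", ".join(missing)
    print(f"{name}: {status}")
```

A disk with an empty list of missing tags is ready for the gallery item; any other disk needs its tags corrected before tenants can use it.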

Closing Thoughts

The tasks of prepping OS image disks for WAP gallery items are simple and predictable. Each step is, however, critical for successfully publishing an associated gallery item in WAP. As with many mechanical tasks, understand the routine, practice, and practice more. A vmm administrator needs to perform these operations with confidence and precision. The alternative is needless frustration and delay, both of which are absolutely avoidable at this juncture of deploying WAP.

What Is and What Is Not Cloud Computing and Why Do I as an IT Pro Care

This was a presentation, available in PDF format, which I delivered at Stratford University. My focus was on bringing clarity about cloud computing to the audience so we can better articulate the business values of cloud computing and why cloud is or perhaps is not the solution for a business problem.

Additional resources are available at http://aka.ms/selected.